A mixed-methods research study investigating why enterprise partners avoided a newly launched B2B platform — and what structural, navigational, and workflow barriers were driving them back to legacy tools.

Enterprise partners kept returning to legacy tools even after a new platform launched. Four research-backed findings showed the problem wasn't change resistance — it was structural friction built into the platform itself.

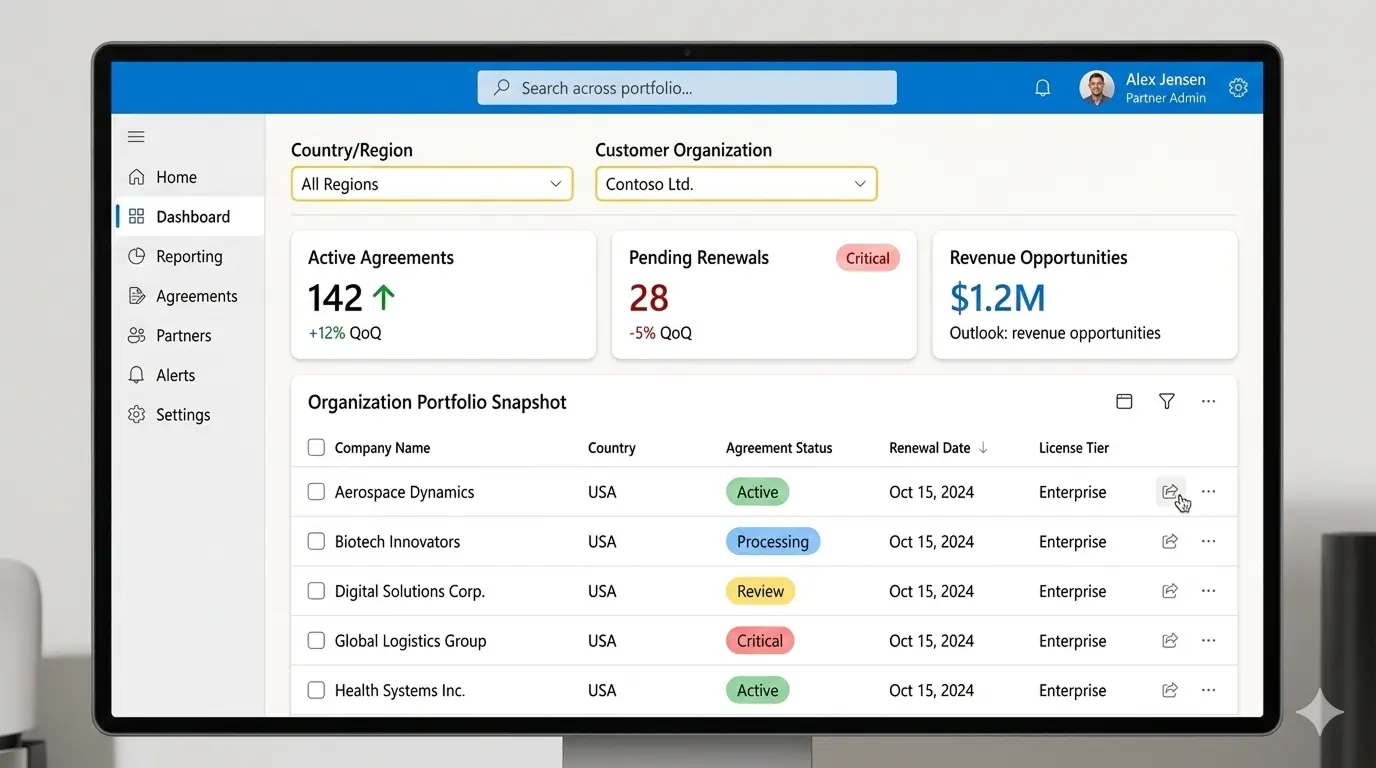

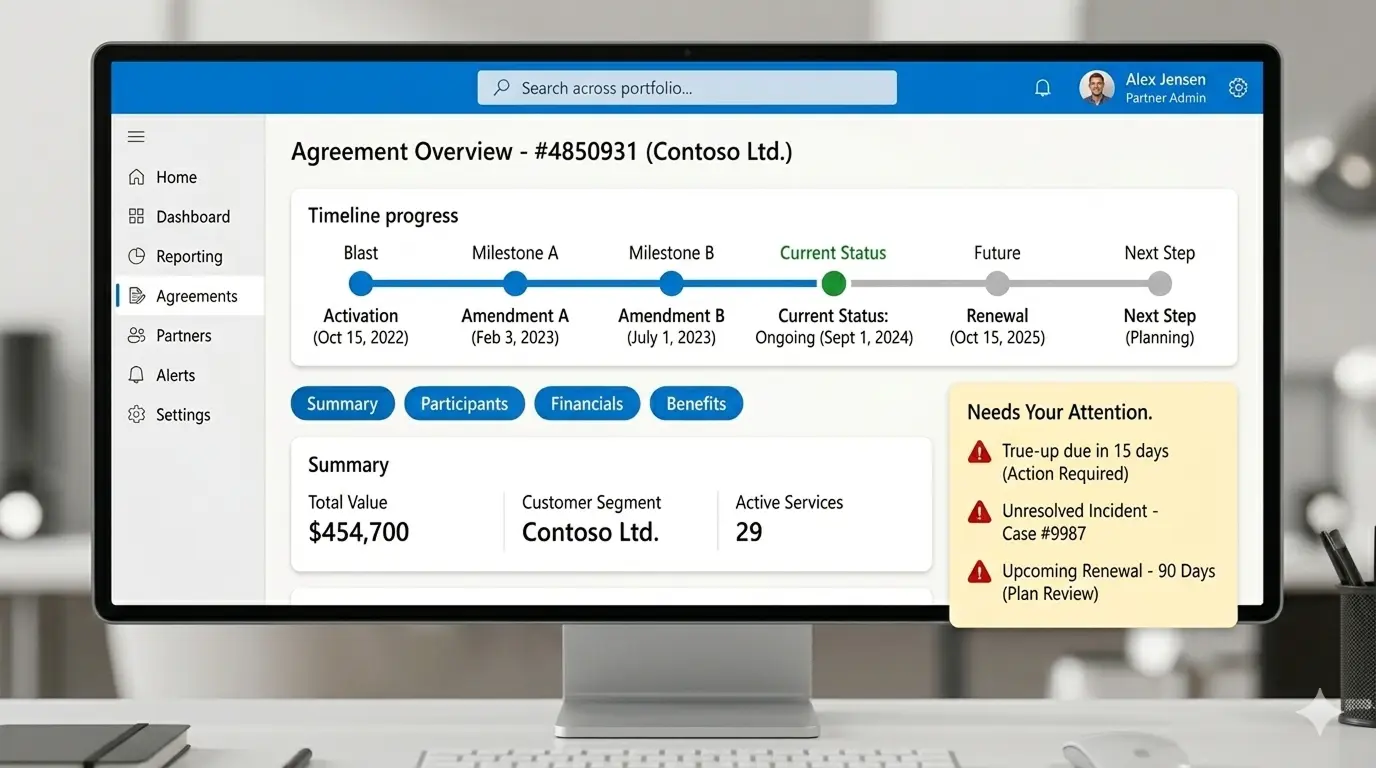

VL Central — the enterprise partner portal used to manage customer agreements, licensing, and financial reporting. AI-generated representation.

VL Central (Volume Licensing Central) is the enterprise partner portal used by resellers and managed service providers to oversee software agreements, manage customer portfolios, and handle financial reporting — all in one place. It replaced a collection of legacy tools that partners had been using for years.

The problem: despite richer functionality, adoption was slow. Partners kept switching back to the old tools. My job was to find out why.

VL Central is the B2B platform used by resellers, managed service providers, and enterprise licensing partners to manage software agreements, track customer portfolios, monitor renewals, and generate financial reports — all within a single interface.

Before VL Central, partners operated across multiple legacy tools: one for agreement management, another for financial reporting, another for alerts. The new platform was designed to consolidate these into one place. In principle, it was a significant improvement. In practice, adoption stalled.

Partners continued using the old tools alongside the new platform — running parallel workflows, cross-checking data, and relying on institutional knowledge built around systems they'd used for years. My engagement was to find out why.

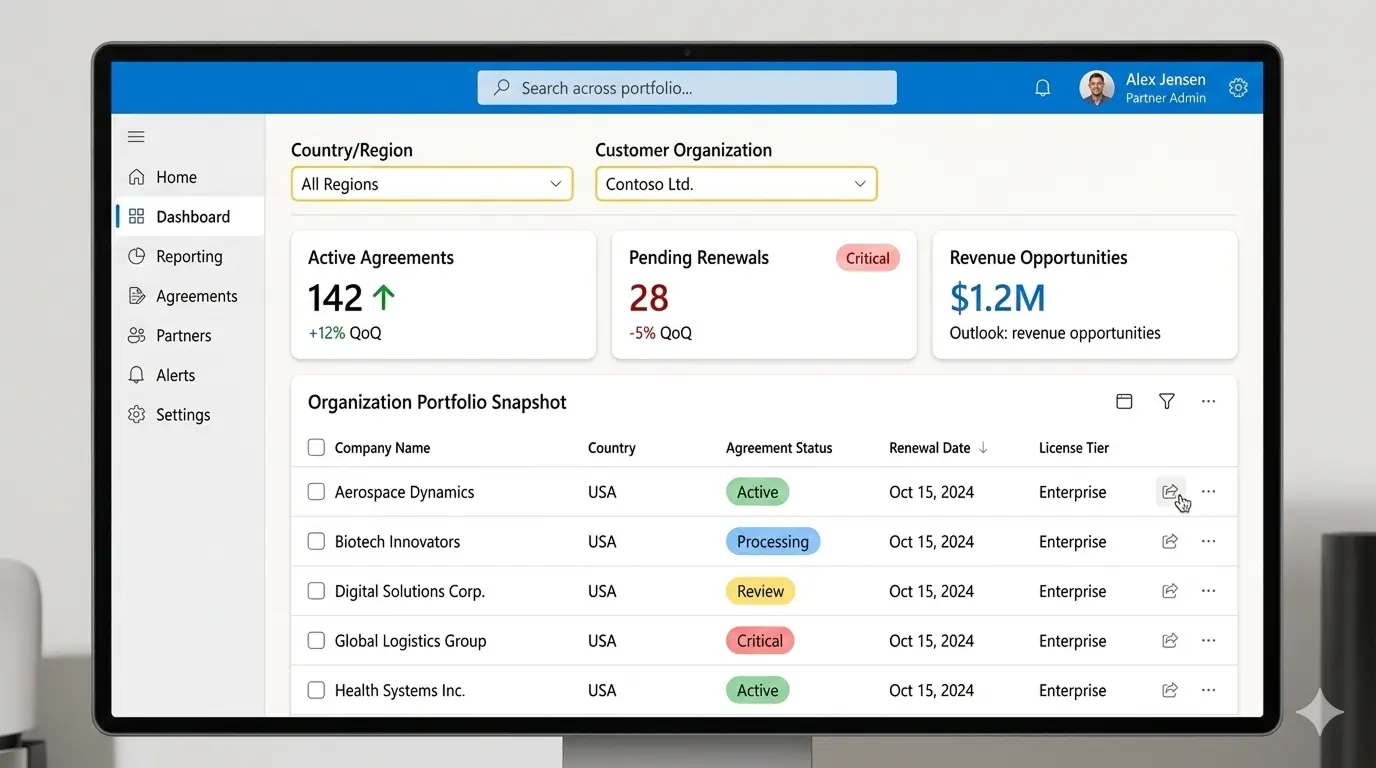

The VL Central partner dashboard — the central hub for managing agreements, licensing, and financial operations. AI-generated representation.

The platform was built by a major enterprise software company. It served thousands of partners globally, managing agreements worth billions in annual software licensing revenue. The stakes for getting the UX right were significant — but adoption remained stuck despite a capable product team and a substantial technical investment.

Despite improved functionality over the legacy tools, adoption was slow. Partners continued using legacy systems even after migration deadlines passed. The internal product team had hypotheses — navigation was confusing, some features were missing — but lacked research evidence to confidently prioritize investments.

I was brought in to investigate three core questions:

This wasn't a question of whether the new platform was better — objectively it was. It was a question of whether it was good enough to disrupt established partner workflows. In enterprise software, that's a very high bar.

I joined as a contract UX researcher for a 6-month engagement. The research scope had been largely pre-defined by the internal product team before I arrived — they needed someone to execute a structured research programme, synthesize findings, and make recommendations actionable for the roadmap.

This context matters for understanding the research choices I made. Certain methods I might have preferred — longitudinal diary studies, co-design sessions — were outside the contracted scope. Others were constrained by timeline, access, or stakeholder readiness. I document these trade-offs explicitly in the Research Design section.

My responsibilities included:

Rather than delivering a single end-of-project report, I shared insights with the product team as they emerged throughout the engagement.

Every major research decision involved a deliberate trade-off. Below is the design logic behind each choice, and the alternatives I considered but didn't pursue.

Four distinct barriers explained why partners kept returning to the legacy system — each supported by both interview evidence and behavioral telemetry.

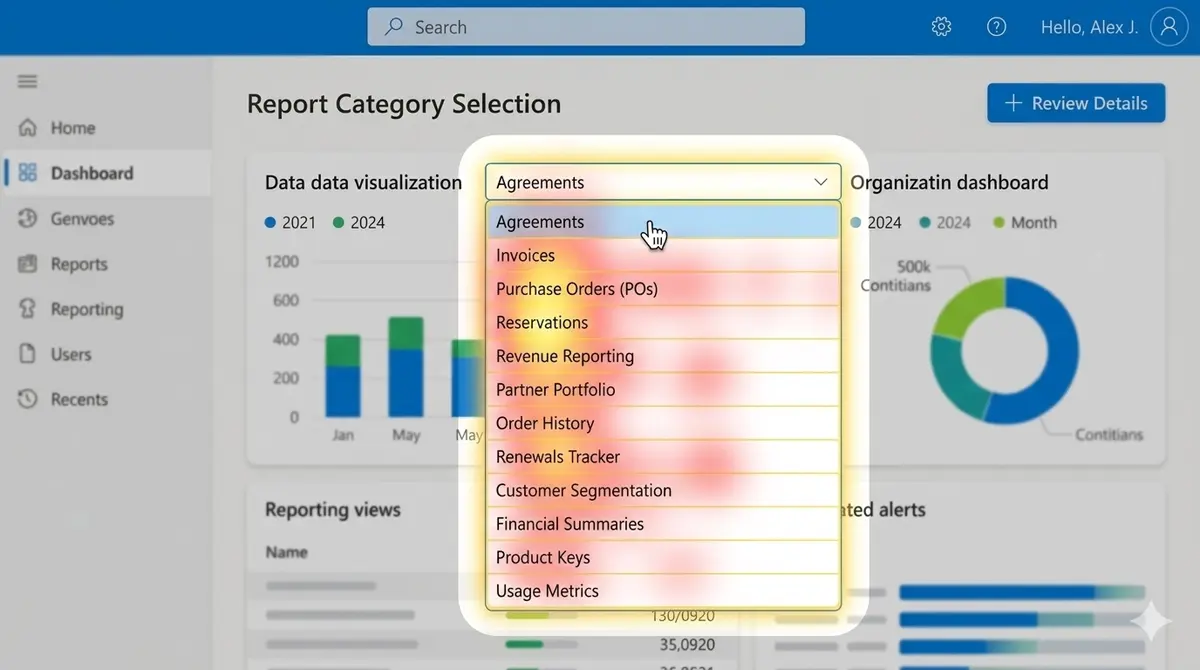

Partners struggled to locate critical reports. The navigation was organized by data type rather than partner workflow, forcing users to browse multiple categories before finding — or giving up on — the report they needed.

The reporting dropdown contained 40+ options, with 14 report names including the word "Revenue" — making differentiation nearly impossible without opening each one individually. Telemetry confirmed this: 74% of active users logged into both the new platform and the legacy system in the same session — browsing the new interface but completing critical tasks in the old one.

The report category selection screen. 40+ options, overlapping names, no grouping by task type. AI-generated representation.
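The dual-use figure above could be derived from session logs roughly as follows. This is a minimal sketch, assuming a hypothetical event schema of `(user_id, session_id, system)` tuples and assuming that "active user" means anyone with at least one logged event. The actual telemetry pipeline and its definitions are not described in this case study.

```python
from collections import defaultdict


def dual_use_share(events):
    """Return the fraction of active users with at least one session
    that touched both the new platform and the legacy system.

    `events` is an iterable of (user_id, session_id, system) tuples,
    where system is "new" or "legacy" (hypothetical schema).
    """
    # Collect the set of systems seen within each (user, session) pair.
    systems_by_session = defaultdict(set)
    for user_id, session_id, system in events:
        systems_by_session[(user_id, session_id)].add(system)

    # Assumption: a user is "active" if they appear in any event.
    active_users = {user for user, _ in systems_by_session}

    # A user counts as dual-use if any single session includes both systems.
    dual_users = {
        user
        for (user, _), systems in systems_by_session.items()
        if {"new", "legacy"} <= systems
    }
    return len(dual_users) / len(active_users) if active_users else 0.0


# Illustrative data only, not taken from the study.
SAMPLE_EVENTS = [
    ("u1", "s1", "new"),
    ("u1", "s1", "legacy"),  # u1 used both systems in one session
    ("u2", "s2", "new"),
    ("u3", "s3", "legacy"),
    ("u4", "s4", "new"),
    ("u4", "s5", "legacy"),  # u4 used both, but in separate sessions
]

if __name__ == "__main__":
    print(f"Dual-use share: {dual_use_share(SAMPLE_EVENTS):.0%}")
```

Counting per session rather than per user separates partners who check the old tool on occasion from those who switch mid-task, which is the behavior the finding describes.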

Partners depended on capabilities in the old system that the new platform had not yet replicated. Scheduled report downloads were the most critical gap, cited by 9 of 16 participants as the primary reason they could not fully migrate.

Without them, partners manually exported data every week, then rebuilt reports in Excel. For teams managing large portfolios, this added hours of low-value work. The legacy tool completed the task in 4 steps. The new platform broke at step 4 with no fallback path.

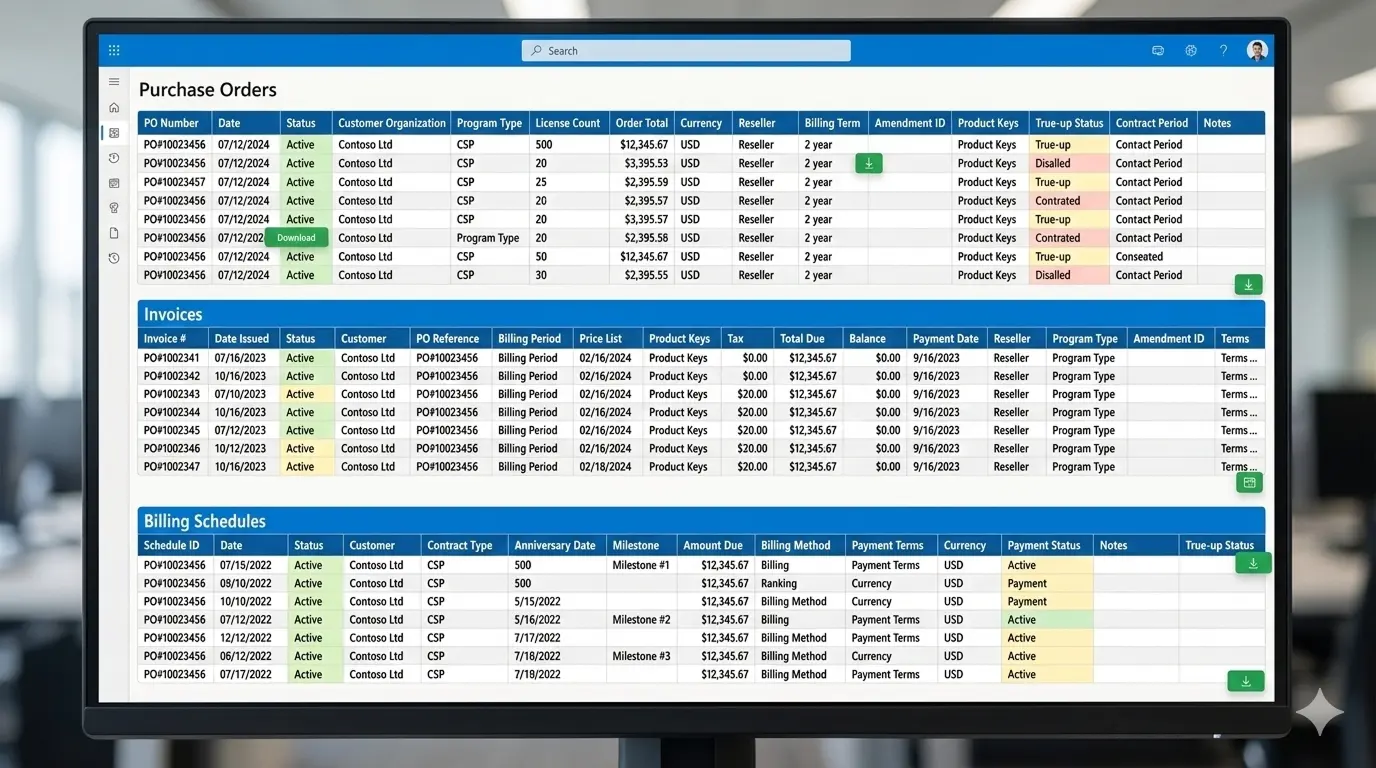

The financial reporting page displayed all data simultaneously with no ability to filter, sort, or prioritize. Every dimension (pending renewals, revenue figures, billing status, historical exports) competed for equal attention.

Partners spent an average of 3.5 minutes on this screen searching for the specific data point they needed. This was the longest dwell time of any page in the platform — not because the data was deep, but because there was no hierarchy to guide attention.

The financial reporting screen — all data dimensions displayed simultaneously with no filtering capability. AI-generated representation.

The platform surfaced alerts for upcoming renewals, rejected orders, and pending true-ups. However, every alert only described what had happened — not what action the partner should take.

Partners who already knew the workflow could act. Partners who didn't simply dismissed the alert. 11 of 16 participants said they routinely ignored alerts because "clicking on them rarely tells you what to do next." HAX evaluation confirmed the platform consistently failed the principle of making clear what the system can and cannot do.

Agreement overview with alert notifications — alerts inform partners of issues but provide no guidance toward resolution. AI-generated representation.

After synthesizing findings, I facilitated a prioritization workshop with product managers using the RICE framework (Reach, Impact, Confidence, Effort). This gave research findings a shared language with product — ensuring insights translated directly into roadmap decisions rather than a backlog of unacted-upon suggestions.

| Problem | Reach | Impact | Confidence | Effort | Score |
|---|---|---|---|---|---|
| Navigation confusion | High | High | High | Medium | 9.2 |
| Alert clarity | Medium | High | High | Low | 8.7 |
| Scheduled report downloads | High | Medium | High | High | 6.8 |
| Financial layout | High | Medium | Medium | High | 6.1 |
| Onboarding guidance | Medium | Medium | Medium | Medium | 5.4 |
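For readers unfamiliar with RICE, the conventional formula is (Reach × Impact × Confidence) / Effort. The sketch below applies that formula to the qualitative levels in the table. The numeric scales (`LEVEL`, `CONFIDENCE`) are hypothetical assumptions, since the case study reports only High/Medium/Low ratings, so the output will not reproduce the workshop's scores, which came from the team's own scale.

```python
from dataclasses import dataclass

# Hypothetical numeric scales: the case study gives only qualitative levels,
# so these mappings are illustrative assumptions, not the workshop's values.
LEVEL = {"Low": 1, "Medium": 2, "High": 3}
CONFIDENCE = {"Low": 0.5, "Medium": 0.8, "High": 1.0}


@dataclass(frozen=True)
class Problem:
    name: str
    reach: str
    impact: str
    confidence: str
    effort: str


def rice_score(p: Problem) -> float:
    """Conventional RICE: (Reach x Impact x Confidence) / Effort."""
    return (
        LEVEL[p.reach] * LEVEL[p.impact] * CONFIDENCE[p.confidence]
        / LEVEL[p.effort]
    )


def rank(problems):
    """Return problems sorted from highest to lowest RICE score."""
    return sorted(problems, key=rice_score, reverse=True)


# Qualitative ratings copied from the table above.
PROBLEMS = [
    Problem("Navigation confusion", "High", "High", "High", "Medium"),
    Problem("Alert clarity", "Medium", "High", "High", "Low"),
    Problem("Scheduled report downloads", "High", "Medium", "High", "High"),
    Problem("Financial layout", "High", "Medium", "Medium", "High"),
    Problem("Onboarding guidance", "Medium", "Medium", "Medium", "Medium"),
]

if __name__ == "__main__":
    for p in rank(PROBLEMS):
        print(f"{p.name}: {rice_score(p):.2f}")
```

Because Effort is the divisor, a low-effort item can outrank a higher-reach one. Under these assumed scales, alert clarity ranks above navigation confusion, which illustrates why effort weighed so heavily in sequencing the work.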

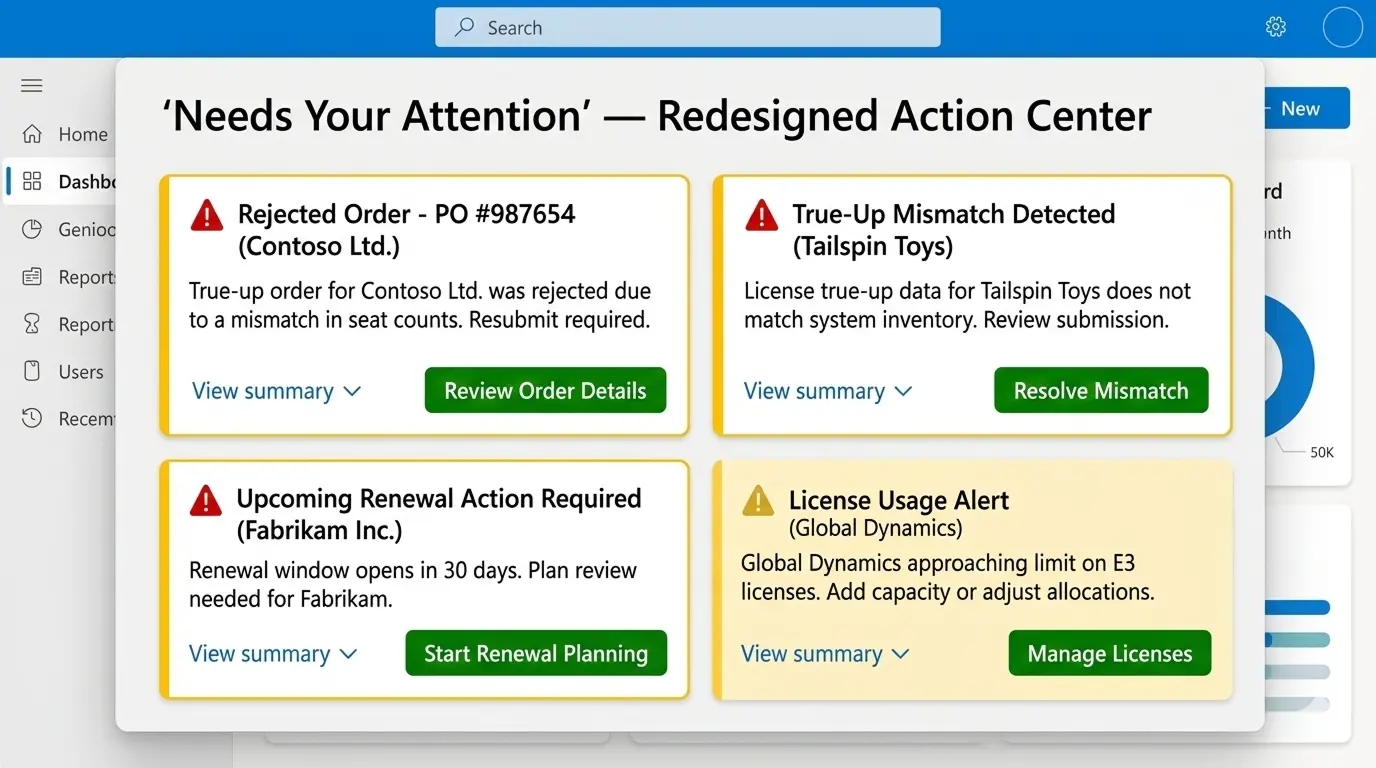

Navigation confusion scored highest overall (9.2), but the alert redesign (8.7) was selected for Sprint 14 because it required the least implementation effort, delivering measurable ROI before the longer navigation changes could be scoped and scheduled.

Redesigned alert action center — introducing priority levels, plain-language summaries, and direct action buttons. AI-generated representation.

Beyond adoption metrics, the research process itself had a lasting team impact. Sharing insights continuously — rather than in a single final report — established a habit of evidence-based product decision-making that extended beyond my engagement. The RICE workshop format was adopted by the product team for future prioritization sessions.

In enterprise platform migrations, the biggest challenge is rarely the interface itself. It is the disruption of established workflows. UX research in this context must go beyond usability heuristics — it needs to map how professionals actually do their work, and surface the moments where a new system breaks that rhythm.